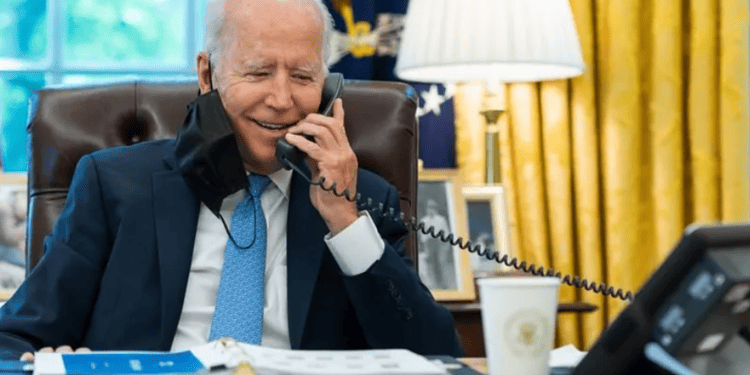

Last month, a recorded message delivered a seemingly urgent plea to potential voters ahead of a New Hampshire Democratic primary election: “It’s important that you save your vote for the November election.” The voice closely resembled the President, but it wasn’t Joe Biden; rather, it was likely a sophisticated AI clone.

The incident has heightened concerns about the growing capabilities of AI-powered audio manipulation, concerns that came into sharper focus when I approached a cybersecurity company for insight. During a call with a representative from Secureworks, I learned that what I had taken for a genuine conversation was in fact a demonstration of an AI system that can place calls and respond to the listener’s reactions, and that even attempted to imitate the representative’s voice.

Using a freely available commercial platform that claims the ability to make “millions” of phone calls per day using human-sounding AI agents, Secureworks showcased the potential of this technology. While not flawless, the AI’s conversational abilities were impressive.

Mr. Pilling of Secureworks is concerned that thousands of such conversational AIs could be deployed rapidly, which he sees as a significant and worrying development from a security perspective. Voice cloning, he notes, adds a further layer of complexity.

Such systems could be misused in phone scams, replacing the extensive manual labor otherwise needed to run call centers or carry out time-consuming phone-based fraud. The efficiency and scale that AI brings here illustrate its broader impact on how such operations are run.

With major elections approaching in the UK, US, and India, worries are intensifying that audio deepfakes (sophisticated fake voices created by AI) will be used to spread misinformation and manipulate democratic outcomes. Audio deepfakes are also harder to verify than AI-generated images, which compounds the problem.

Misinformation expert Lorena Martinez says social media firms urgently need to strengthen their disinformation-fighting teams, and she calls on developers of voice cloning technology to anticipate potential misuse before launching their tools.

While the UK’s Electoral Commission has expressed clear concerns about the trustworthiness of information during elections, Sam Jeffers, co-founder of Who Targets Me, cautions against excessive cynicism. He stresses that democratic processes are robust and warns that overstating the threat of deepfakes could inadvertently cause people to lose faith in reliable information.

The need to strike a balance between vigilance and trust in information remains paramount as the impact of AI technologies continues to unfold in the realm of elections and misinformation.